The text you just read online? There is a reasonable chance a human did not write it. AI content has flooded search results, academic platforms, and professional inboxes so fast that most people have no reliable system for catching it.

This article is for editors, teachers, content managers, and curious readers who want an honest, practical framework for spotting AI writing in 2026. Not a list of buzzwords. A real approach.

AI detectors get a lot of credit they have not earned. The tools are useful, but the confidence scores they display can seriously mislead people making high-stakes decisions about a piece of content.

That is the gap most guides skip entirely, so let us start there.

Why Spotting AI Writing Is Harder Now Than It Was Two Years Ago

The models producing AI content have improved dramatically since 2023.

GPT-4-level outputs and their successors now handle tone variation, emotional nuance, and structural complexity in ways that earlier versions could not.

That means the obvious tells, the robotic cadence, the hollow phrasing, are far less reliable as detection signals than they were even eighteen months ago.

My take is that the real problem is not the AI getting smarter. It is that most people's detection habits have not kept up. They are still looking for the 2023 version of AI text while reading 2026 outputs.

The Signs That Still Hold Up

Some patterns have stuck around despite model improvements. These are not guarantees, but they are useful starting filters.

Overly consistent tone throughout an entire piece is one of the most persistent signals. Human writers drift. Energy changes, sentence rhythm shifts, opinions sharpen or soften mid-piece.

AI outputs tend to maintain a smooth, calibrated register from opening to close.
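
One rough way to put a number on rhythm drift is to measure how much sentence length varies across a piece. The sketch below is only an illustration, not how any detector named in this article works: `sentence_lengths` and `rhythm_variation` are hypothetical helpers, and the sentence splitter is a naive regex that assumes plain English prose.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Count words per sentence, splitting naively after '.', '!' or '?'."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def rhythm_variation(text: str) -> float:
    """Coefficient of variation (stdev / mean) of sentence lengths.

    Returns 0.0 when there are fewer than two sentences to compare.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.stdev(lengths) / statistics.mean(lengths)


if __name__ == "__main__":
    even = "One two three. Four five six. Seven eight nine."
    uneven = "Stop. This sentence is quite a bit longer than the first."
    print(rhythm_variation(even))    # identical lengths give 0.0
    print(rhythm_variation(uneven))  # mixed lengths give a larger value
```

A low value only means the sentences are evenly sized. Treat it as a prompt for a closer read, not as evidence of AI, since technical human writing can score low too.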

Missing specificity on subjective claims also tends to appear. A review that praises a film's "emotional depth" without naming a single scene, character moment, or line of dialogue is worth scrutinizing.

AI summarizes. Humans usually reach for the specific thing that stuck with them.

Other patterns worth flagging:

- Circular reasoning in explanations, where the definition of a thing is essentially restated as its function

- Vaguely attributed sources, with phrases like "research suggests" or "experts agree" but no actual citation trail

- Claims that are technically accurate but feel assembled rather than argued

- Lists that appear in places where a human writer would have just written a sentence or two
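
The vague-attribution pattern in the list above is the easiest one to check mechanically. A minimal sketch, assuming a hand-maintained phrase list: the first two phrases come from this article, the other two are assumptions added for illustration, and `find_vague_attributions` is a hypothetical helper name.

```python
import re

# Starter list: the first two phrases are quoted in the article,
# the last two are assumed examples. Extend it for your own genre.
VAGUE_ATTRIBUTIONS = [
    "research suggests",
    "experts agree",
    "studies show",
    "it is widely believed",
]


def find_vague_attributions(text: str) -> list[tuple[str, int]]:
    """Return (phrase, character offset) pairs in order of appearance."""
    hits = []
    for phrase in VAGUE_ATTRIBUTIONS:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        for match in re.finditer(pattern, text, flags=re.IGNORECASE):
            hits.append((phrase, match.start()))
    return sorted(hits, key=lambda hit: hit[1])


if __name__ == "__main__":
    sample = "Research suggests this works. Experts agree it is safe."
    for phrase, offset in find_vague_attributions(sample):
        print(f"{offset}: {phrase}")
```

A hit is a reason to look for the missing citation, nothing more. Human writers use these phrases as well, so the count matters less than whether a source ever follows.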

Ambiguity in first-person statements is another tell. When a piece says "I found this useful" without connecting that claim to any specific use, scenario, or outcome, that reads like a simulation of experience rather than an account of it.

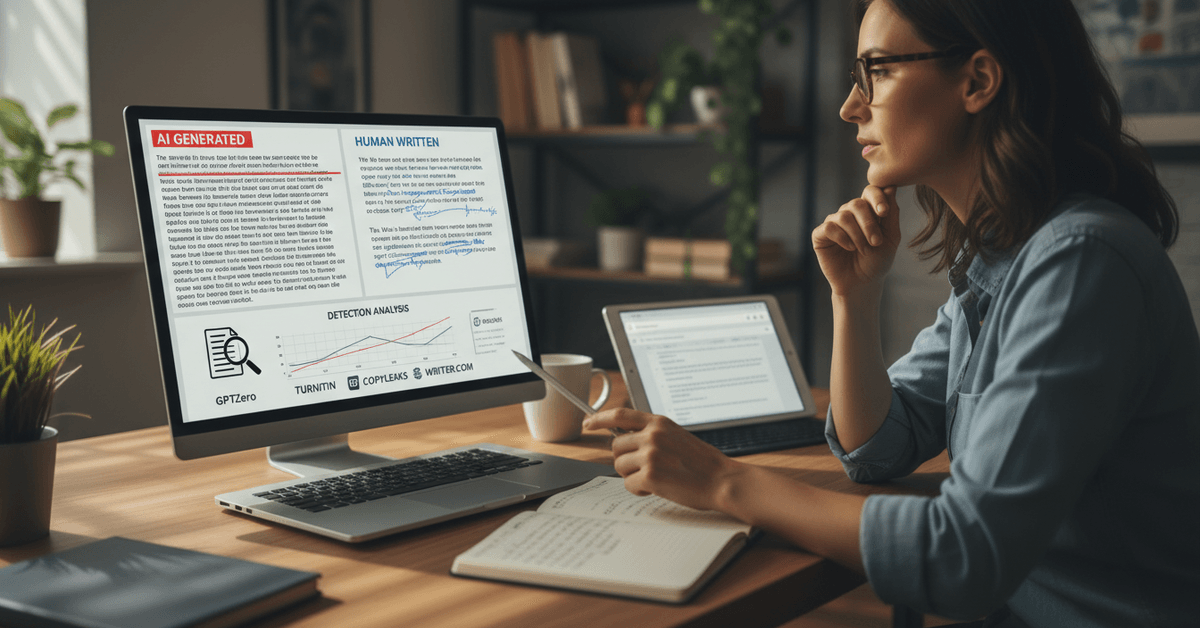

The Tool Landscape in 2026 and What Each One Actually Does

The major AI detection platforms have matured considerably. Each takes a different approach, and understanding the difference matters more than just picking the one with the highest rating.

| Tool | Best Use Case | Key Limitation |
|---|---|---|
| GPTZero | Academic essay screening | Struggles with heavily edited AI drafts |
| Turnitin AI Detection | Institutional academic workflows | Requires existing Turnitin integration |
| Copyleaks AI Detector | Editorial and publishing teams | Probability scores vary by content genre |
| Writer.com AI Detector | Enterprise batch content review | Built more for marketing teams than education |
| QuillBot AI Detector | Quick spot checks on short content | Less reliable on longer documents |

The takeaway: no single tool dominates across all content types. Match the tool to your context, not to whichever product came up first in your search.

Why False Positives Are a Bigger Problem Than People Admit

I was skeptical that false positives were a serious problem until I read research in which detectors flagged highly technical human writing as AI-generated at rates above 15% in some genre categories: instruction manuals, academic papers in STEM fields, legal documents. These formats mirror AI output patterns because they prioritize clarity and precision over personality.

That matters enormously. A professor who relies entirely on a detector to flag a student's STEM essay is making a judgment call based on a flawed signal.

The ethical failure mode here is not missing AI writing. It is punishing human writers for working in a genre that happens to resemble machine output.
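
To see why a 15% false positive rate matters so much, it helps to run the arithmetic on a batch of essays. The sketch below is a back-of-the-envelope calculation, not a measurement: only the 15% figure comes from the research mentioned above. The batch size, the share of AI-written essays, and the detection rate are invented inputs, and `flag_breakdown` is a hypothetical helper.

```python
def flag_breakdown(
    total: int,
    ai_share: float,
    detection_rate: float,
    false_positive_rate: float,
) -> tuple[float, float, float]:
    """Return (true flags, false flags, share of flags that hit human writing)."""
    ai_essays = total * ai_share
    human_essays = total - ai_essays
    true_flags = ai_essays * detection_rate            # AI essays correctly flagged
    false_flags = human_essays * false_positive_rate   # human essays wrongly flagged
    share_human = false_flags / (true_flags + false_flags)
    return true_flags, false_flags, share_human


if __name__ == "__main__":
    # Assumed inputs: 200 STEM essays, 10% AI-written, detector catches 90% of those.
    true_flags, false_flags, share_human = flag_breakdown(200, 0.10, 0.90, 0.15)
    print(f"true flags: {true_flags:.0f}")
    print(f"false flags: {false_flags:.0f}")
    print(f"share of flagged essays written by humans: {share_human:.0%}")
```

With these made-up inputs, 27 human essays are flagged against 18 AI ones, so about 60% of the flagged essays were written by a person. Change the assumptions and the ratio moves, but the point stands: when most submissions are human, even a modest false positive rate can dominate the flags.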

How to Build a Detection System That Does Not Break Under Pressure

Tools are one layer. But a reliable detection system needs more than one layer.

- Start with context, not software. What do you know about this piece before you read a word? Who supposedly wrote it? Do they have a writing history? Does the voice match previous work? A good editor asks these questions before reaching for a detector.

- Sample, do not dump. Copying an entire 2,000-word article into a detector gives you a blended probability score that can obscure the useful signal. Copy three separate paragraphs, from the opening, the middle, and the closing argument. Compare those scores. AI content that has been lightly human-edited often shows up in the unedited middle sections.

- Search unusual phrases verbatim. Put the phrase in quotation marks and run it through Google. AI systems generate text probabilistically, which means they sometimes produce near-identical phrasing across separate outputs. A phrase that sounds slightly off but turns up word for word on multiple unrelated sites is a soft red flag.
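
The "sample, do not dump" step above can be sketched in a few lines. This assumes the article is plain text with paragraphs separated by blank lines. `sample_paragraphs` and `score_spread` are hypothetical helpers, and the scores are numbers you would copy by hand from whichever detector you use, since no detector API is assumed here.

```python
def sample_paragraphs(article: str) -> dict[str, str]:
    """Pick the opening, middle, and closing paragraphs of an article."""
    paragraphs = [p.strip() for p in article.split("\n\n") if p.strip()]
    if len(paragraphs) < 3:
        raise ValueError("need at least three paragraphs to sample")
    return {
        "opening": paragraphs[0],
        "middle": paragraphs[len(paragraphs) // 2],
        "closing": paragraphs[-1],
    }


def score_spread(scores: dict[str, float]) -> float:
    """Gap between the highest and lowest detector score across the samples."""
    return max(scores.values()) - min(scores.values())


if __name__ == "__main__":
    article = "Intro.\n\nSecond.\n\nThird.\n\nFourth.\n\nWrap-up."
    for position, paragraph in sample_paragraphs(article).items():
        print(position, "->", paragraph)

    # Invented scores, as if pasted in from a detector after three separate runs.
    manual_scores = {"opening": 0.20, "middle": 0.80, "closing": 0.30}
    print("spread:", round(score_spread(manual_scores), 2))
```

A wide spread is the signal a single blended score would hide. In the invented example the middle sample stands far above the other two, which is the shape the article describes for lightly edited AI drafts.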

Ask these questions directly about any piece you are evaluating:

- Does this article contain a specific example that could only come from lived attention to the subject?

- Does the author's stated position shift even once, and is there a visible reason for the shift?

- Are there any moments of genuine uncertainty, where the writer admits a limit or changes direction mid-thought?

These are not questions AI writing can consistently answer yes to.

The Detection Gap Nobody Talks About

I think the most underappreciated detection challenge in 2026 is collaborative content: a human writer who uses AI to generate a draft and then rewrites 40 to 60 percent of it. This output is genuinely hybrid.

A human contributed judgment, selection, and voice. An AI contributed structure and volume.

Current detection tools score this as partially AI-generated, which is technically true and practically useless. Knowing a piece is "35% likely AI" tells you almost nothing about whether the ideas, arguments, or conclusions are original.

The actual question worth asking is not "was AI involved?" It is "are the ideas, arguments, and claims original?" Those are different questions, and most detection tools are not set up to answer the second one.

Questions People Ask About Detecting AI-Generated Text

Q: Can AI detectors reliably catch content from newer AI models like GPT-4o or Claude Sonnet? Not reliably. Detector accuracy drops with newer models because the outputs are more varied and less pattern-predictable. Most current tools were trained primarily on earlier model outputs, which means they may give lower confidence scores on text from sophisticated 2025-2026 models even when the content is fully AI-produced.

Q: Is it legal to use AI detection tools on someone else's content without their knowledge? Most commercial detectors process text without storing it permanently, but privacy policies vary significantly by platform. Running a public article through a detector carries different ethical weight than analyzing a private submission, and institutional policies on this are still catching up.

Q: What should I do if a detector flags my own human-written content as AI? Document your writing process if you can: drafts, notes, timestamps, source materials. Many academic institutions now accept process documentation as evidence of human authorship, especially for technical or data-heavy writing that naturally triggers detection patterns.

Q: Do paraphrasing tools like QuillBot help AI content evade detection? They can lower detection probability scores, but they do not eliminate the structural patterns that human reviewers spot. Running paraphrased AI content through multiple detectors and then applying the manual review checklist above will often reveal inconsistencies that a single tool misses.

Q: Are there genres where AI detection is essentially unreliable? Technical documentation, legal writing, and certain academic disciplines produce human outputs that consistently score as AI-generated. Detection in these genres should rely far more on contextual and sourcing analysis than on automated probability scores.

Conclusion

The best defense against AI content is a reader who knows what human thought actually looks like on the page.

That is a skill worth developing, and it compounds over time. Start asking harder questions of the text you consume, and the answers will start becoming obvious faster than any tool can flag them.